Deadlines Make You Dumb

How pressure narrows your thinking at exactly the wrong moment

TL;DR: The Yerkes-Dodson law shows that pressure only helps up to a point. After that, stress narrows your thinking, makes risks feel inconvenient, and pushes teams toward the fastest answer instead of the right one.

You have launched under pressure. You know the feeling. The deadline is fixed, the client is waiting, and someone flags a problem at the worst possible moment. Something is off. An edge case nobody handled. You have two choices: delay and face the fallout, or ship and deal with it later. Under pressure, people often ship not because they thought it through, but because the deadline did the deciding for them. That is what stress changes. It makes the fastest relief feel a lot like a good decision.

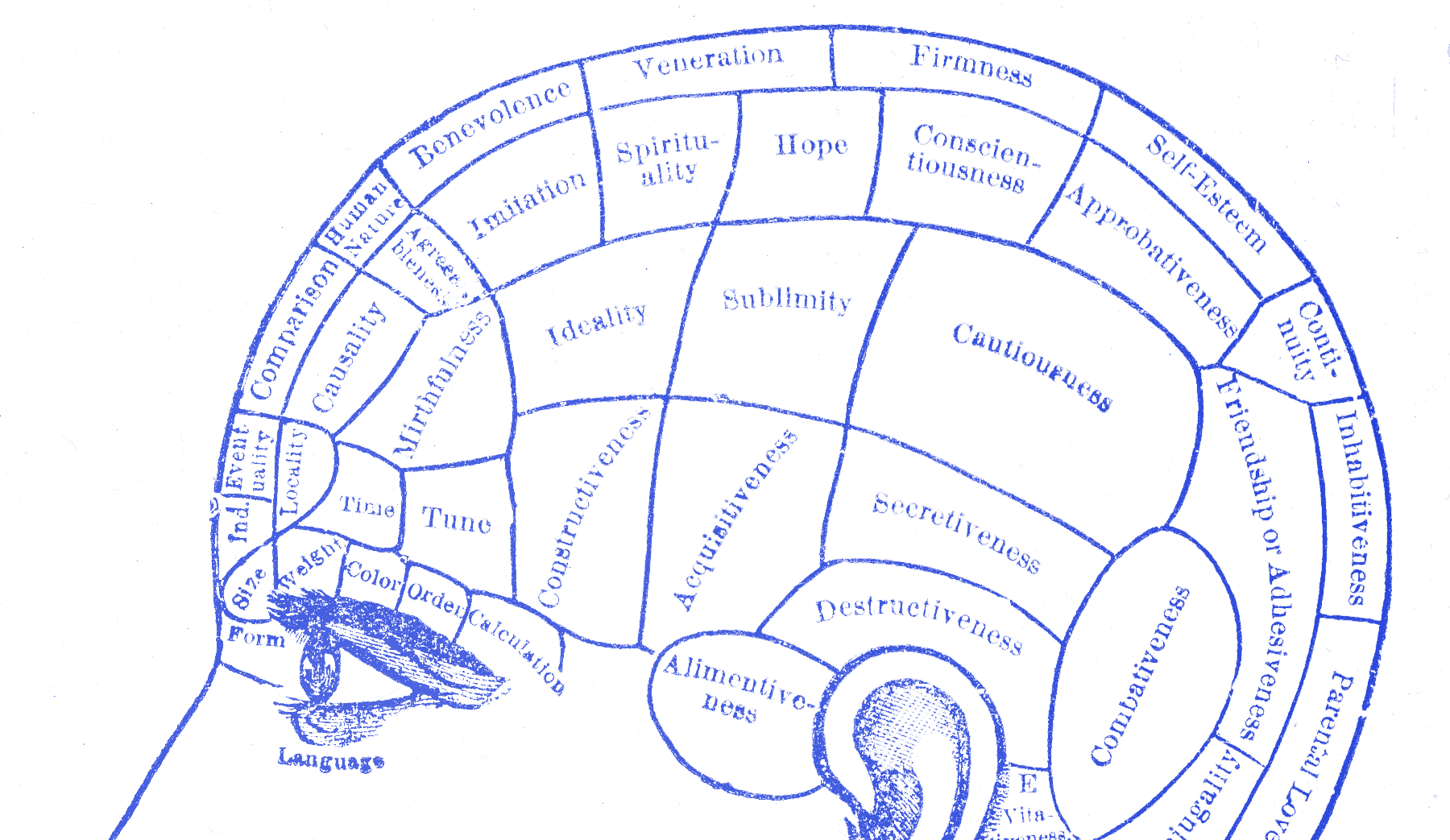

Stress narrows your view

When Robert Yerkes and John Dodson ran their 1908 experiments on learning and stress, they found something uncomfortable. Some pressure improved performance, but past a certain point more pressure made things worse. Too little and nothing got done. Too much and thinking broke down. The useful zone was in the middle. Extreme stress did not just stop helping. It made the work worse.

When you perceive threat, cortisol floods your system and your thinking narrows to the most immediate problem. That is useful if the threat is physical. It is much less useful when the threat is a deadline. Then you stop weighing options and start executing the first answer that makes the feeling go away. You are no longer thinking as broadly. You are trying to make the discomfort stop.

Design work needs wide thinking. You hold multiple options open, consider edge cases, question your own assumptions. Stress shrinks all of that. It turns a complex decision into a simpler one: ship or don’t ship. The nuance fades, and with it the judgment that might have caught the problem. A rushed team can mistake narrowed thinking for clarity because the room suddenly sounds more certain.

Barry Staw documented what this looks like at the organizational level. His 1981 research showed that when groups feel threatened, they pull decision-making upward, share less information, and fall back on what worked before. They stop experimenting. Nobody wants to be the person who slows things down. The engineers closest to the problem go quiet, because raising a concern under pressure feels like making things worse. Often, the people who know the most say the least at exactly the moment you need them. Later they say they had doubts all along.

NASA knew it was not ready

On the night of January 27, 1986, engineers at Morton Thiokol spent three hours trying to stop the Challenger from launching. The forecast for the next morning was -7°C (20°F). The rubber O-rings sealing the rocket booster joints had never been tested below 12°C (53°F). Cold makes rubber stiff. A stiff O-ring might not seal properly. If hot gas got past it, it would burn through the fuel tank. Roger Boisjoly, the O-ring specialist, told NASA the temperature was a problem and they should not fly.

NASA pushed back hard. Lawrence Mulloy asked where it said you couldn’t launch below 12°C (53°F). George Hardy said he was shocked by the recommendation. The pressure was enormous. Challenger had already been delayed five times. The launch was tied to the President’s State of the Union address. Morton Thiokol had an $800 million contract with NASA and a $10 million penalty for delays. The engineering team showed its data. NASA wanted proof it would fail, not just a worry that it might. Boisjoly had data and a well-founded concern, but he could not prove the seal would fail.

Jerry Mason, Morton Thiokol’s senior vice president, pulled his team aside and told Bob Lund, the VP of Engineering, to stop thinking like an engineer and start thinking like a manager. Lund changed his recommendation. The engineers were overruled. The launch was approved.

Lund later testified to the Rogers Commission:

We had to prove to them that we weren’t ready, and so we got ourselves in the thought process that we were trying to find some way to prove to them it wouldn’t work.

— Robert Lund

The rules had flipped. The engineers were no longer trying to prove it was safe to launch. They were trying to prove it was dangerous, to people who had already decided to go. Challenger launched the next morning at 2°C (36°F). Seventy-three seconds into the flight, the shuttle broke apart. All seven crew members died.

You have seen this before

A smaller version of this happens in product work all the time. You cut the usability test because the build is happening tomorrow. You skip the accessibility check because there’s no time to fix it anyway. You hear a concern from a developer and change the subject because dealing with it would mean pushing the demo. The concern does not go away. It just lands in someone else’s sprint.

The pressure makes these shortcuts feel more reasonable than they are. Stress has narrowed your focus to the finish line and made everything else feel like a distraction. The concern feels inconvenient. I have seen that show up as a room full of people nodding while one person goes quiet, then Slack filling up with doubts after the meeting is over. Those late doubts are usually the warnings worth taking seriously. By then the room has already moved on, which is exactly why the problem survives.

When warning signs get ignored

The useful move here is one question, asked at the worst possible moment: what would it take to check this?

Not “should we delay?” Not “is this really a problem?” Those questions lead to rationalization. “What would it take to check this?” is concrete. It turns the concern into an estimate: an hour of testing, a day of investigation. Sometimes the answer is small enough to fit before the ship date. Sometimes it tells you the risk is real and you need to have the harder conversation. Either way, you gave the concern a fair hearing instead of burying it because the timing was bad. That is a better use of pressure than pretending pressure made the risk disappear.

The Challenger engineers had the information, and they said so. Nobody at NASA asked what it would take to check their concern. The deadline made that question feel impossible, and it was treated as impossible because the launch had already become more real than the warning.