You Are Not Listening

How confirmation bias filters feedback before it lands

TL;DR:

You run research to find truth. Usually you're running it to find permission. The three users who failed? Edge cases. The one who got through? Featured in the deck. That's not research. That's a highlight reel.

You ran the user test. You watched the sessions. You took notes. And somehow you came out of it feeling like the design was fine.

Three users couldn’t complete the flow, but one of them clearly hadn’t read the instructions, one wasn’t really your target demographic, and the third just seemed to be having a bad day. The one who got through? You wrote that up in detail. You quoted her. You put her in the summary deck.

That’s not research. That’s a ritual you performed over the evidence you already wanted.

What the brain does with inconvenient data

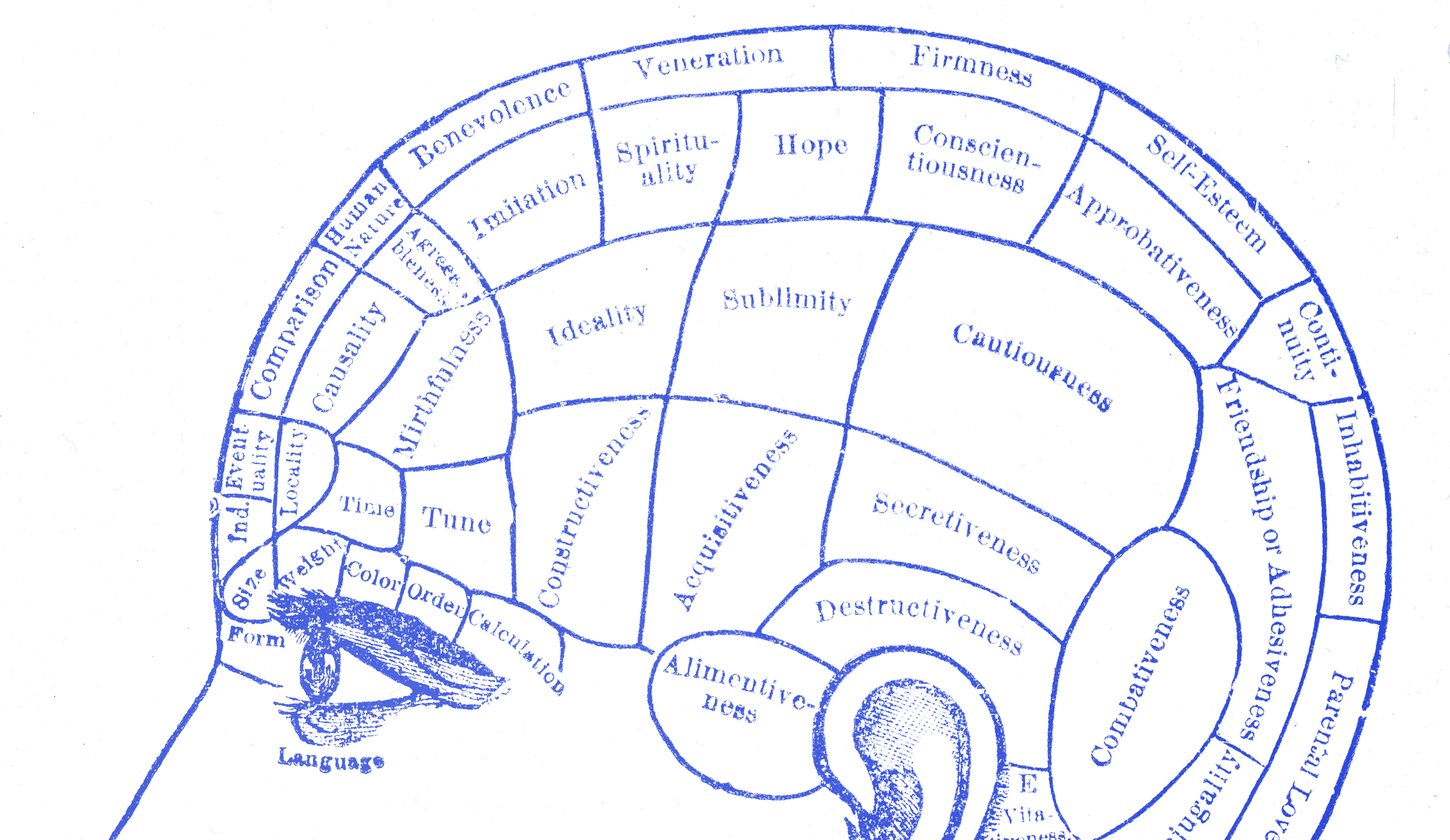

In 1960, Peter Wason ran a simple experiment. He showed participants a sequence of three numbers (2, 4, 6) and told them the sequence followed a rule. Their job was to discover the rule by generating their own sequences and getting yes-or-no feedback on whether each fit. Participants could test as many sequences as they liked before stating their hypothesis. Almost every participant generated sequences that confirmed their initial guess (doubling, adding two, even numbers) and almost none tested sequences designed to break it. Most stated their hypothesis with confidence after collecting only supporting evidence. Most were wrong. The rule was any three numbers in ascending order. The participants hadn’t been testing their hypothesis. They had been confirming it.
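The logic of the task is easier to see when it is written out. What follows is a minimal sketch, not Wason’s procedure: the function names and the specific test sequences are made up for illustration, and the participant’s guess is assumed to be “each number is two more than the last.” A test is only informative when the experimenter’s answer differs from what the guess predicted.

```python
def hidden_rule(seq):
    """The experimenter's actual rule: any three numbers in ascending order."""
    a, b, c = seq
    return a < b < c


def hypothesis(seq):
    """An assumed participant guess: each number is two more than the last."""
    a, b, c = seq
    return b - a == 2 and c - b == 2


def informative(tests):
    """Keep only the tests where the real answer contradicts the guess."""
    return [t for t in tests if hidden_rule(t) != hypothesis(t)]


# Illustrative sequences, chosen for this sketch.
confirming_tests = [(8, 10, 12), (20, 22, 24), (1, 3, 5)]      # all fit the guess
disconfirming_tests = [(1, 2, 3), (5, 10, 100), (6, 4, 2)]     # all violate the guess

print(informative(confirming_tests))     # []
print(informative(disconfirming_tests))  # [(1, 2, 3), (5, 10, 100)]
```

Every confirming test gets a yes and teaches nothing, because the guess predicted a yes. Two of the three sequences built to break the guess also get a yes, and that surprise is the only thing in the whole exercise that shows the guess is too narrow.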

Wason called this the confirmation bias, and in the decades since, Raymond Nickerson at Tufts produced the most thorough account of how it operates. Nickerson’s landmark 1998 review found that the bias runs across domains, levels of intelligence, and types of expertise. It is not a flaw in untrained thinking. It is a feature of how reasoning works. His conclusion is uncomfortable:

“Once one has taken a position on an issue, one’s primary purpose becomes that of defending or justifying that position.”

Nickerson

This is not a weakness people notice in themselves. That’s the mechanism. The bias doesn’t announce itself. It operates as selective attention, as charitable interpretation, as the quiet decision not to probe a finding that would make your life complicated. You don’t feel like you’re filtering. You feel like you’re evaluating.

The Segway team and the meaning of skepticism

Dean Kamen launched the Segway in 2001 with projections of 10,000 units a week. His team had convinced investors including John Doerr and Steve Jobs that the product would be bigger than the personal computer. When feedback came in about the price (at launch, $5,000), the regulatory barriers to riding on sidewalks, and the impracticality in most urban environments, the team’s response was consistent: they treated skepticism as a communication problem. If critics didn’t see the potential, it was because the vision hadn’t been explained well enough. In its first five years the product sold 30,000 units total. Ninebot acquired Segway Inc. in 2015, and in June 2020 the company announced the end of PT production after fewer than a hundred thousand units across two decades, against projections of hundreds of thousands per year.

The team hadn’t been deluded. They had been invested. Investment and confirmation bias are close to the same thing in practice. The more you care about a design, the harder your brain works to protect it from contrary evidence. Every piece of feedback becomes a sorting problem: is this signal worth acting on, or noise that can be set aside? Confirmation bias doesn’t help you sort well. It helps you sort in favor of the conclusion you’ve already drawn.

The growth mindset is not a poster

Carol Dweck’s research on mindset offers a useful frame here, though not in the way it tends to get cited. Dweck found that people who treat ability as a fixed trait experience negative feedback as a threat to identity and tend to avoid situations where they might be proved wrong. People with a growth mindset treat negative feedback as information about what needs to change. The difference isn’t intelligence or skill. It is the underlying belief about what feedback is for.

For designers, the implication is structural. If you treat your design as a statement about your talent, feedback becomes a referendum on you and the bias runs hard. If you treat it as a working hypothesis, a guess that will be wrong in ways you haven’t found yet, the brain has less to defend. The design isn’t you. It’s a guess. Guesses are meant to be tested and broken.

This is not a pep talk. It is a process recommendation. The question before any research session is not “will users validate this?” It is “what would have to be true for this to be wrong?” If you can’t answer that second question, you’re not doing research. You’re doing theater.

Build the test that could break it

The shift that confirmation bias demands is methodological, not attitudinal. Trying harder to stay open doesn’t work, because the bias operates below the level of intention. What works is designing the research, before the session starts, so that discouraging evidence is harder to dismiss.

Before any usability test or feedback round, write down the three things that concern you most about the design. Not the things you think are fine. The things you are actually worried about. Then design your research to probe those. Watch for exactly those failure modes. If none of them show up, that’s genuine signal. If they do show up, you have already committed to treating them as real. Had you not named them beforehand, you would have explained them away, called the session a success, and moved on. This is sometimes called a kill criterion: the condition that, if you find it, tells you the design needs to change. Name it before you look for it, or you will never find it.
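If it helps to make the commitment concrete, the idea can be sketched in a few lines. This is an illustration under stated assumptions, not an established tool: `KillCriterion`, `evaluate`, the concern names, and the thresholds are all hypothetical. The point is only that the thresholds are written down before any session is observed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillCriterion:
    concern: str        # a failure mode named before the sessions (hypothetical label)
    max_failures: int   # how many sessions may hit it before the design must change


def evaluate(criteria, sessions):
    """Return the concerns whose failure count exceeds the pre-set threshold.

    `sessions` is a list of sets; each set holds the concerns observed
    in one usability session.
    """
    triggered = []
    for criterion in criteria:
        failures = sum(1 for observed in sessions if criterion.concern in observed)
        if failures > criterion.max_failures:
            triggered.append(criterion.concern)
    return triggered


# Written down BEFORE the test. Names and thresholds are made up for this sketch.
criteria = [
    KillCriterion("misses_save_button", max_failures=1),
    KillCriterion("abandons_at_pricing", max_failures=1),
    KillCriterion("confused_by_onboarding", max_failures=0),
]

# Recorded AFTER the test: one set of observed concerns per session.
sessions = [
    {"misses_save_button"},
    {"misses_save_button", "abandons_at_pricing"},
    set(),
    {"misses_save_button"},
]

print(evaluate(criteria, sessions))  # ['misses_save_button']
```

Because the thresholds were fixed in advance, three users missing the save button cannot be reclassified afterward as an edge case, a wrong demographic, and a bad day.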

The Wason task is instructive here. The participants who found the right answer tested sequences designed to return a no. They looked for the edge where the rule stopped holding. That’s the posture. Not skepticism toward your users, but skepticism toward your own conclusions.

You’re not listening if you only hear what you expected to hear.