You Are Not Listening

How confirmation bias filters feedback before it lands

TL;DR: Confirmation bias shapes what feedback you notice, trust, and explain away. If you do not decide in advance what would prove you wrong, research ends up backing what you already believed.

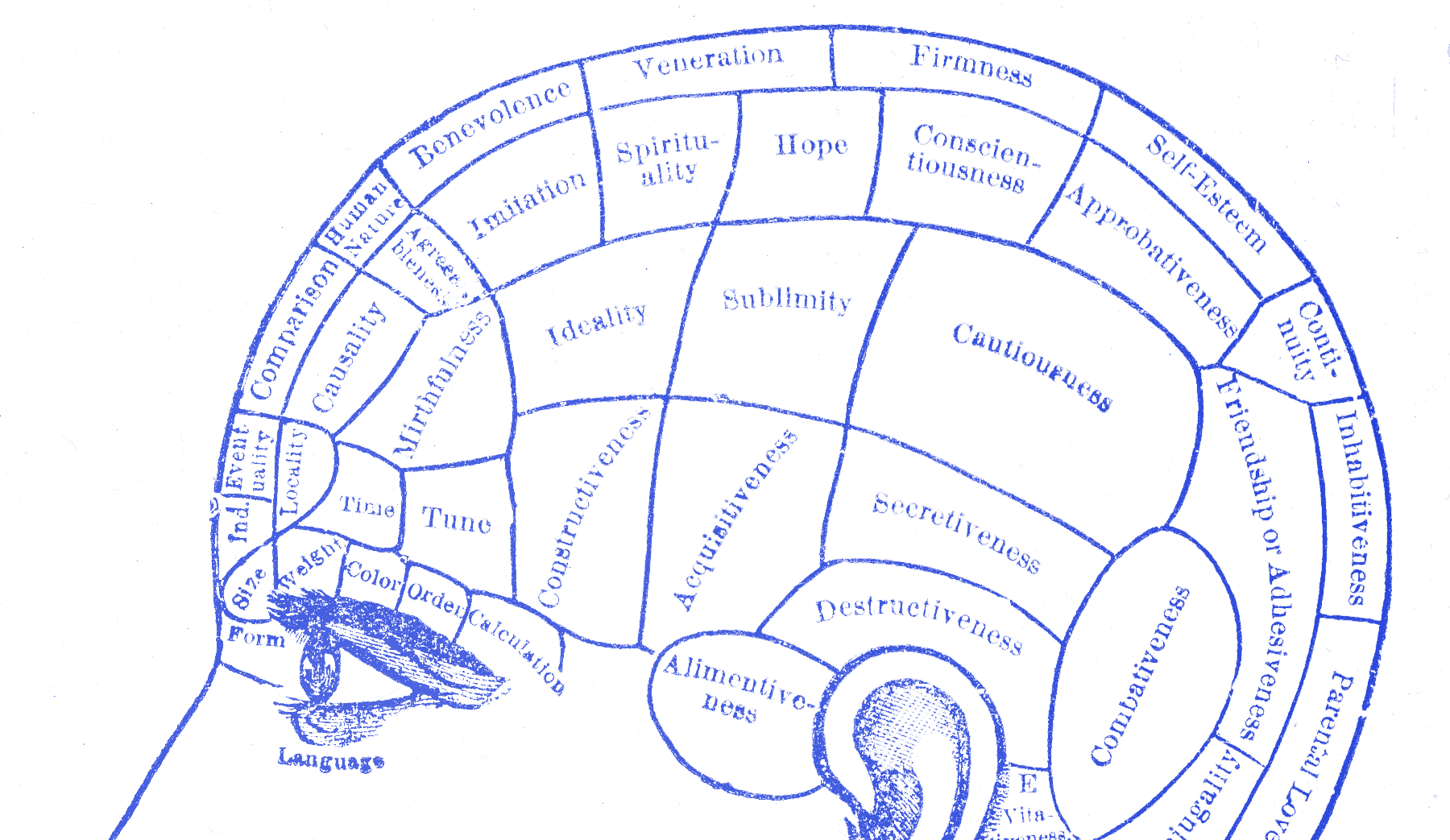

You can have the evidence right in front of you and still walk away with the answer you wanted. That is confirmation bias. You already had a theory in your head, and you treated the research as an errand to fetch support for it.

You test the story you want to keep

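In 1960, Peter Wason gave people the sequence 2, 4, 6 and asked them to figure out the rule behind it. Most proposed sequences that matched the rule they had already guessed, such as numbers going up by two. Very few proposed sequences that could break their guess. The actual rule was simply any three numbers in ascending order, so the confirming examples kept coming back “yes” and taught them nothing. They were not really testing the idea. They were looking for support.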
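Here is a minimal sketch of why the confirming tests fail, written in Python. The guessed rule ("each number goes up by 2") and the specific test sequences are assumptions chosen for illustration, not data from Wason's study. A test is only informative when the guess and the real rule disagree about it.

```python
# Hidden rule in Wason's task: any three numbers in ascending order.
def true_rule(triple):
    a, b, c = triple
    return a < b < c

# A typical first guess (assumed here for illustration): each number goes up by 2.
def guessed_rule(triple):
    a, b, c = triple
    return b - a == 2 and c - b == 2

# Sequences chosen because they fit the guess.
confirming_tests = [(8, 10, 12), (20, 22, 24), (1, 3, 5)]
# Sequences chosen because the guess says they should fail.
disconfirming_tests = [(1, 2, 3), (5, 10, 20), (6, 4, 2)]

def informative(tests):
    """Return the tests where the guess and the true rule disagree."""
    return [t for t in tests if guessed_rule(t) != true_rule(t)]

print(informative(confirming_tests))     # []
print(informative(disconfirming_tests))  # [(1, 2, 3), (5, 10, 20)]
```

Every confirming test gets a “yes” from both rules, so it cannot tell them apart. Only the sequences you expected to fail can expose the gap.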

Raymond Nickerson reviewed decades of work on this and landed on the ugly part: “Once one has taken a position on an issue, one’s primary purpose becomes that of defending or justifying that position.” That is the mechanism at work here. You do not scan feedback evenly. You lean toward what fits and hurry past what does not.

That is why a room full of notes can still produce a dishonest conclusion. The bias sits in what you notice, what you trust, and what you explain away.

It also sits in what gets written down with energy. Designers often record the confirming quote in full and summarize the contradictory one in two words. “Edge case.” “Confused user.” “Needs onboarding.” The sorting starts before synthesis ever becomes a slide.

Segway kept reading the warning wrong

When the Segway launched in 2001, the story around it was huge. It was supposed to remake urban movement. When people pushed back on the roughly $5,000 price, the sidewalk rules, and the fact that most cities were not built for it, the answer was usually the same: people did not understand it yet.

That is what makes the example useful. Negative signal kept arriving, and it kept getting translated into the wrong diagnosis. Not “the product does not fit the world well enough,” but something closer to “the world has not caught up.” In its first five years the Segway sold 30,000 units total. Production ended in 2020 after fewer than a hundred thousand units across two decades.

That is confirmation bias in product form. The warning does not disappear. It gets reread until it supports the story you already wanted.

That move shows up in smaller product work all the time. A failed test becomes a messaging problem. Low conversion becomes an education problem. Weak adoption becomes a discoverability problem. Sometimes that is true. Sometimes it is just a nicer story than “the thing itself is not landing.”

You can hear the difference if you listen to the verbs. Learning language sounds like “what happened there” and “why did they stop.” Defensive language sounds like “they didn’t get it” and “we need to explain it better.” One set of questions opens the problem. The other closes it before you have even looked at it.

Why good intentions do not fix it

Carol Dweck’s work on fixed and growth mindsets is useful here for a narrow reason. If you treat testing as a pass-fail verdict on whether you were right, the bias gets stronger. If you treat testing as a search for what breaks, you have a better chance of seeing the bad signal before you edit it into something nicer.

That is the split I care about. It is not really about ego here. It is about procedure. Are you running the session to learn, or are you running it to feel safe shipping?

I have watched designers quote the one participant who got through and explain away the three who did not. Nothing dramatic. No lying. Just selective seriousness.

What to write down before the test

Write down what would prove you wrong before the research starts. That is the move. Not “keep an open mind.” Put the failure condition on paper. What finding would make you change direction? What behavior would tell you the current approach is weak? If you do not define that first, the session will turn into a pile of impressions you can sort any way you like afterward.
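If it helps to make that concrete, here is a small Python sketch of a failure condition written down before the session and checked afterward. Everything in it is hypothetical: the `FailureCondition` name, the checkout task, and the threshold of one failure are assumptions for illustration, not a standard research method.

```python
from dataclasses import dataclass

# Frozen, so the condition cannot be quietly edited after the sessions run.
@dataclass(frozen=True)
class FailureCondition:
    description: str
    max_failures: int  # more failures than this puts the approach in doubt

def verdict(condition, outcomes):
    """outcomes: list of bools, True if the participant completed the task."""
    failures = outcomes.count(False)
    if failures > condition.max_failures:
        return "change direction"
    return "keep going"

# Written before the test (hypothetical task and threshold).
condition = FailureCondition(
    description="Participant cannot finish checkout unaided",
    max_failures=1,
)

# Recorded after the test: one participant got through, three did not.
print(verdict(condition, [True, False, False, False]))  # change direction
```

The point is the order of operations. The threshold exists before the results do, so the one participant who got through cannot outvote the three who did not.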

The people who solved Wason’s problem tried sequences that could fail. That is the right instinct here too. Do not just collect support for the design. Go looking for the point where the design breaks.

Do the same thing in synthesis. Give one person the job of arguing that the current direction should not ship. Not because they already believe that. Because the room needs at least one person trying to make the contradictory evidence hold its shape.

And when you review notes, mark the surprises first. Not the quotes that fit the current story best. The moments that made the room go quiet. The participant who stopped where nobody expected. The step that looked safe in Figma and turned messy in motion. Those are the parts most likely to get flattened if nobody protects them. They are also usually the parts that tell you where the work is actually weak.

You are not listening if the evidence only counts when it agrees with you.