Affordances Signal Action

How interfaces communicate what's possible

TL;DR:

Affordances are invisible facts. Signifiers are visible clues. When you strip away shadows, borders, and depth cues to make interfaces cleaner, you're removing the only signals telling users where to tap.

The Year We Threw Away the Signals

Around 2013, the design industry decided that visual cues were ugly. Shadows went. Borders went. Raised buttons went. Gradients, depth, texture, every hint that one element sat above another: gone. Screens got flat and clean and beautiful. Designers celebrated. Users started tapping the wrong things.

This was not a coincidence. Apple shipped iOS 7 that year and stripped six years of visual signals from the iPhone interface in one release. Windows 8 had done the same thing about a year earlier. The whole industry followed, because the whole industry watches Apple. The reasoning sounded right: users had learned to use touchscreens, they no longer needed training wheels, the interface could grow up. What nobody asked was whether those shadows and borders were training wheels at all, or whether they were the only thing telling users where to tap. Flat design as an aesthetic survived. The idea that you can remove every visual signal and still have a usable interface did not. The reason has a name, and most designers are still getting it wrong.

Two Things Designers Keep Mixing Up

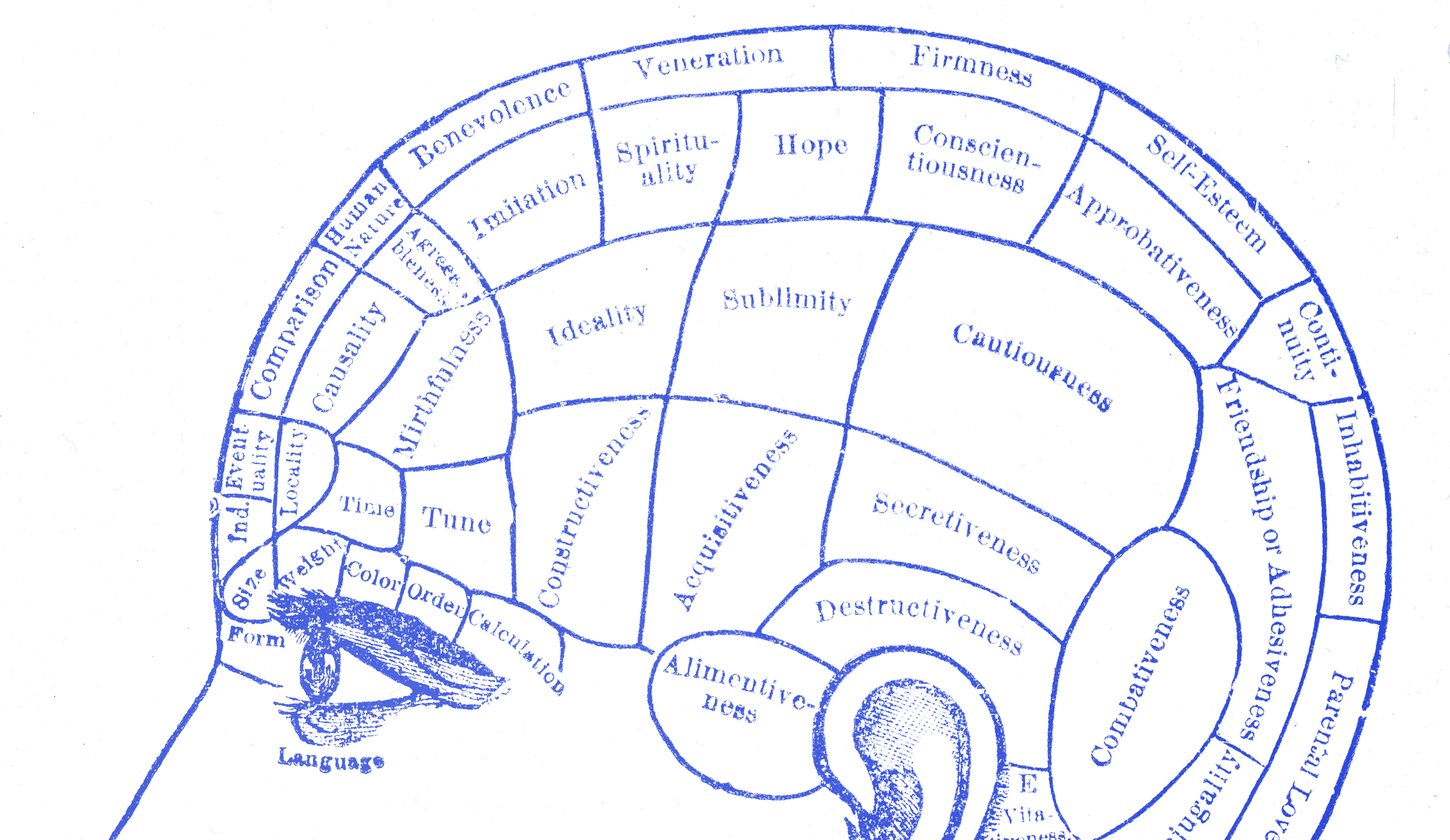

In 1979, the psychologist James J. Gibson set out the idea of affordances in his book The Ecological Approach to Visual Perception. An affordance, for Gibson, was a relationship between an object and an agent. A chair affords sitting. A handle affords gripping. A surface affords walking on. The key thing Gibson said, the thing designers keep forgetting, is that affordances exist whether you can see them or not. They are facts about the world, not signals. A door affords pushing whether it has a handle, a flat plate, or nothing at all.

Don Norman brought affordances into design in 1988, in The Psychology of Everyday Things. The design world loved the concept and ran with it. There was one problem: designers misunderstood it, and Norman admits he caused some of that confusion himself. By 1999, he was walking it back. By 2013, he had paired it with a more useful term. In the revised edition of The Design of Everyday Things, he wrote that “signifiers specify how people discover those possibilities: signifiers are signs, perceptible signals of what can be done.” Then he went further: after decades of watching designers use his term wrong, he concluded that they should forget about affordances and focus on signifiers instead.

That sentence is the chapter. Affordances are invisible facts. Signifiers are visible clues. Affordances exist whether you communicate them or not; signifiers are how users find them. When a button looks like a button, the signifier is doing its job. When a button looks like a heading, the signifier is gone, and the user is standing at the door, unsure whether to push or pull.

When a designer says in a meeting, “I’m going to add an affordance here,” they almost always mean a signifier. The word is wrong, and this is not a nitpick: the distinction explains what goes wrong when design gets too clean.

What the Evidence Showed

The Nielsen Norman Group published a formal usability appraisal of iOS 7 and found what anyone watching real user behavior would have expected. Users had trouble telling interactive elements apart from decorative ones. Buttons that looked like text went unnoticed. In apps that embraced the flat aesthetic, it was, in NNG’s words, “hard to say” what the call to action was. Support forums filled with questions about how to do things users had done for years. The affordances had not changed: tapping a button still worked the same way. What Apple had stripped were the signifiers. The possibilities were still there; users just couldn’t see them.

NNG noted that Windows 8 had created the same problem a year earlier, and the pattern was clear: when interfaces remove visual cues in pursuit of a cleaner aesthetic, users pay the cognitive price. They hesitate. They tap wrong. They give up. Apple corrected course in later iOS releases, restoring depth cues and contrast signals that made interactive elements readable again. Flat design stayed; the wholesale removal of signifiers did not. This is what happens when designers optimize for how something looks rather than what it communicates. The interface gets beautiful and the user gets lost.

The Silent Walk

There is a test for this, and it costs nothing. Call it the silent usability walk. Put your interface in front of someone who didn’t build it. Don’t say anything. Don’t point. Don’t explain. Just watch. Every moment of hesitation is a missing signifier. Every wrong tap is a false one: something that looked interactive and wasn’t, or looked static and was. Every time the person moves a finger toward something and then stops, something failed. Not their brain. Your design.

The point of staying silent is this: the moment you open your mouth, you are compensating for what the interface doesn’t communicate on its own. Designers do this in usability sessions without noticing. “Oh, that button is up there.” “Yeah, you have to scroll down first.” Every one of those corrections is a confession that something your product should have said didn’t get said. Gibson’s chairs and Norman’s doors apply directly. The action is possible, so the affordance exists. The question is whether the user can discover it, and that is the signifier’s job. When you run the silent walk and nobody hesitates, the signifiers are working. When you find yourself wanting to lean forward and point, they are not.

A Locked Door With a Beautiful Finish

The cleaner you make an interface look, the more deliberate you have to be about what you leave in. Every shadow you remove takes a depth cue with it. Every border you drop erases a boundary. Every gradient you flatten leaves a surface that no longer looks pressable. Most of those things can go. Some of them cannot, and telling which is which requires watching real people use the thing. Affordances take care of themselves: the action is possible whether anyone can see it or not. Signifiers need your attention. They are the only thing standing between a user who knows what to do and a user who doesn’t. A clean screen that nobody can use is just a locked door with a beautiful finish.