Research as Alibi

How motivated reasoning turns research into confirmation

TL;DR:

You don’t always run research to find out what users think. Sometimes you run it to justify what you’ve already decided. The screener, the questions, and the synthesis each quietly steer the outcome. That’s not research. That’s expensive decoration for a conclusion reached at the kickoff meeting.

You tell yourself you run research to find out what users want. That’s not always what’s happening. Sometimes the question is already answered before the study starts, and the research exists to confirm it with enough ceremony that nobody pushes back in the meeting. The screener filters out the wrong respondents. The interview questions lead toward the preferred finding. The synthesis groups the data until the story fits. You don’t lie. You just stop looking when you’ve found what you needed.

This is not cynicism. It’s psychology.

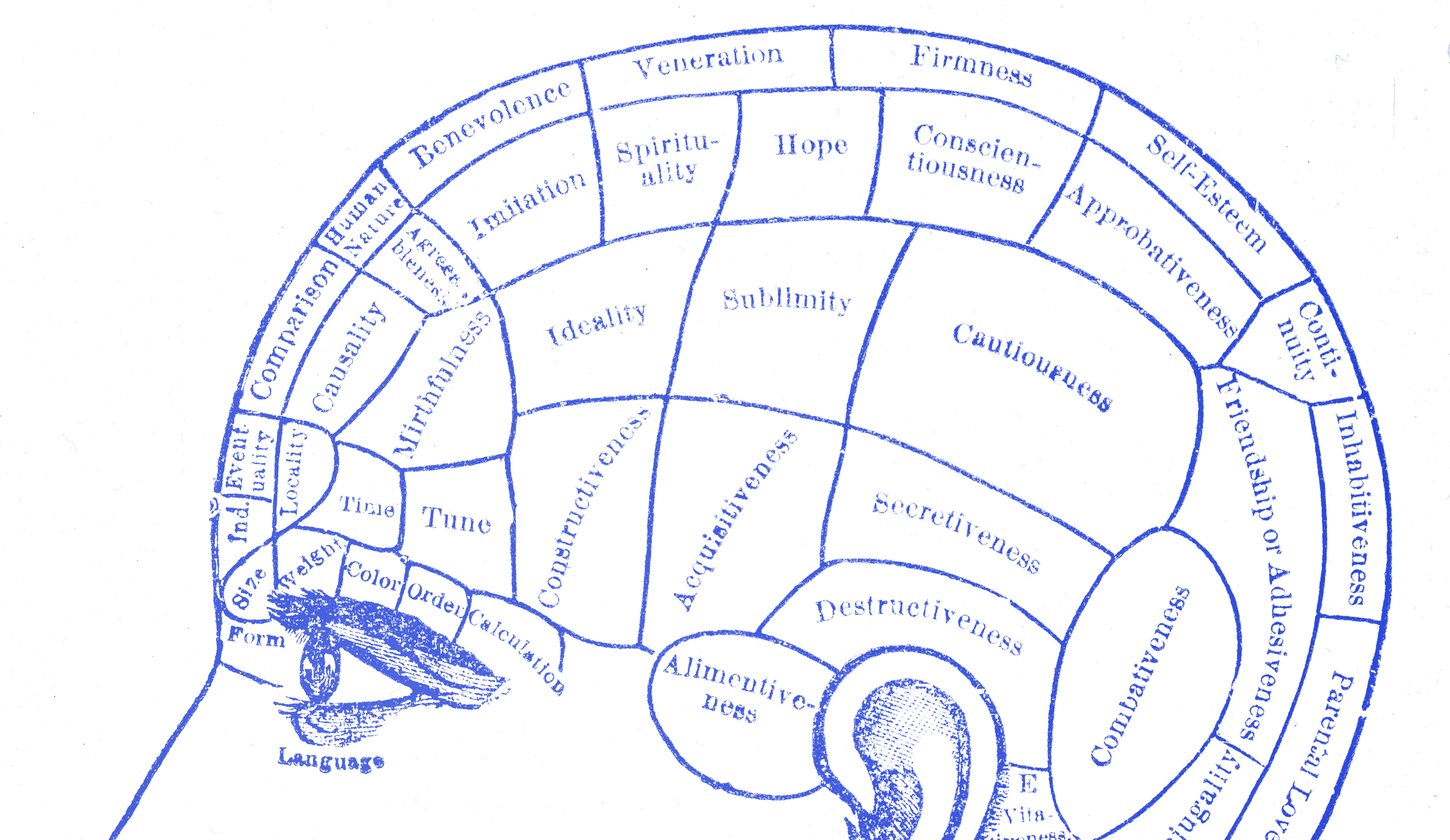

The brain that won’t let you lose

The mechanism has a name: motivated reasoning. Psychologist Dan Kahan, whose work at Yale Law School examines how smart people arrive at wrong conclusions on matters of fact, defines it in plain terms. Motivated reasoning is

“the tendency of people to conform assessments of information to some goal or end extrinsic to accuracy.”

Dan Kahan

The goal extrinsic to accuracy, in design, is almost always the product you’ve already built or the direction your team has already committed to. The research doesn’t lead you to the conclusion. The conclusion leads you to run research until something confirms it.

Raymond Nickerson’s sweeping 1998 review of confirmation bias across dozens of fields shows that the problem is not that people are dumb or dishonest. It’s that the brain filters information at the point of intake. Evidence that supports a held belief passes through without friction. Evidence that threatens it gets examined with more scrutiny, questioned harder, and explained away with more effort. In one experiment by Peter Ditto and David Lopez, participants who received an unfavorable medical test result demanded more evidence before accepting it than participants who received a favorable one. Same test. Different willingness to believe. The asymmetry is automatic, not deliberate.

You don’t need to want a particular research outcome to corrupt the process. You just need to care about the thing you’re researching.

What research corruption actually looks like

It doesn’t look like fraud. It looks like reasonable choices, made one at a time.

You recruit users who are “engaged with the product category,” meaning users already interested in products like yours. You write interview questions that ask about pain points in existing solutions, because your product solves those pain points. You run usability tests with participants who represent your imagined customer, not the full market. In the debrief, you note three quotes that validate the direction and file the two contradictory ones under “edge cases.” Every decision sounds professional. None of them is neutral.

The Lovallo and Kahneman HBR analysis of executive optimism in project planning shows the same pattern at organizational scale. Leaders commission research, but frame it around the question “how do we execute this?” rather than “should we execute this?” The research becomes operational, a tool for implementation, not evaluation. The harder question, the one that might produce an unwanted answer, never gets asked.

The Google+ case

Google launched Google+ in June 2011 on the back of internal research. Users wanted more control over how they shared content online. That much was real. But the team had already concluded what the solution should be: a social network that organized contacts into “Circles,” in direct competition with Facebook. The research supported the diagnosis. It said nothing reliable about whether a new Google social network was what people actually wanted or needed.

The result was a product that addressed a real finding on paper but missed the actual situation. People didn’t just want better privacy controls. They had no reason to leave Facebook, build a second social graph from scratch, and learn a new system. In January 2012, comScore estimated that the average Google+ user spent about 3.3 minutes on the platform over the whole month. Facebook users averaged over seven hours. The usage numbers pointed back to a flaw in the starting premise. The team had studied a problem, decided on an answer, then gathered evidence in its favor. Google+ was shut down for consumers in April 2019. It never built a sustainable daily user base.

The one question that tells you if your research is real

There’s a simple diagnostic. Before you run a study, write down what findings would cause you to cancel the project or change direction. Be specific. Not “significant usability issues,” which is vague enough to explain away. Something concrete: “If fewer than 60% of users can complete the core task without help, we rethink the flow.” Or: “If users don’t identify this problem as meaningful in their lives, we stop.”

If you can’t write that sentence, you’re not running research. You’re running documentation.

This matters because writing falsification criteria forces a reckoning with what the research is actually for. Most teams skip this step not from dishonesty but because nobody asks the question out loud. The study gets designed around execution assumptions (which participants to recruit, which tasks to observe, which metrics to track) without ever specifying what outcome would change the course of the project. That omission is where the alibi gets built.

Jakob Nielsen and others have written at length about this pattern: the difference between testing to learn and testing to validate is a difference in intent that shapes every methodological decision downstream. But intent is hard to police. The practical move is to write the falsification criteria first, before you design the study, while you still don’t know the outcome. Treat it as a commitment device. If the results don’t meet the threshold, you agreed in advance that the threshold mattered. It’s harder to rationalize your way past a number you wrote down last week.
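If it helps to make the commitment concrete, the idea can be sketched in a few lines of Python. This is only an illustration, not a prescribed tool: the `Criterion` class, the `triggered_actions` function, the metric name, the date, and the session numbers are all hypothetical. The 60% threshold is the example from earlier in this section.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)  # frozen: a criterion cannot be edited once written
class Criterion:
    metric: str        # hypothetical metric name
    minimum: float     # a result below this triggers the action
    action: str        # what the team agreed to do if the threshold is missed
    written_on: date   # recorded so the commitment predates the results


def triggered_actions(criteria, results):
    """Return the agreed action for every criterion the results fail to meet."""
    actions = []
    for c in criteria:
        if c.metric not in results:
            # A metric nobody measured cannot count as a pass.
            actions.append(f"{c.metric}: not measured, so the criterion is unresolved")
        elif results[c.metric] < c.minimum:
            actions.append(
                f"{c.metric}: {results[c.metric]:.0%} < {c.minimum:.0%}, {c.action}"
            )
    return actions


# Written before the study is designed (the date is illustrative).
criteria = [
    Criterion("unassisted_task_completion", 0.60, "rethink the flow", date(2024, 1, 8)),
]

# Filled in after the sessions; 5 of 12 participants is made-up data.
results = {"unassisted_task_completion": 5 / 12}

for line in triggered_actions(criteria, results):
    print(line)
```

The code itself is trivial. What matters is the order of operations: the criteria list exists, with a date on it, before `results` does, and a metric that was never measured is reported as unresolved rather than quietly passing.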

The real alibi

Research as alibi doesn’t just waste time and money. It insulates bad decisions from correction. The people in the room who had doubts see the research slide and go quiet. The stakeholder who wasn’t sure gets outvoted by data. The designer who spotted the real problem three weeks ago never gets the opening to name it, because the research has already spoken. The alibi doesn’t just protect the idea. It shuts down the conversation that might have saved the product.

Good research is supposed to be uncomfortable. It’s supposed to find things you didn’t already know. If your research never surprises you, it isn’t research. It’s a paper trail.